With cloud computing, data is typically sent from the devices on the periphery of the IoT to a central server for analysis and some decision making. Then, the results or decisions, including the inferences made by artificial intelligence in some cases, are returned to the originating devices for execution.

However, this model is problematic for a growing number of IoT applications, such as offshore oil and gas drilling. That’s because some of the platforms can be equipped with as many as 35,000 sensors. If data is collected continually and sent back to the cloud for processing, it consumes so much bandwidth that the link can saturate. Thus, sending the collected data on a round trip to the central cloud is too slow and too expensive. A secondary, but no less important, concern is that transmitted data becomes vulnerable to tampering.
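To get a feel for the bandwidth involved, the following is a rough back-of-the-envelope sketch. Only the 35,000-sensor count comes from the text above; the sample rate (10 readings per second) and payload size (16 bytes per reading) are assumed values chosen for illustration, and the helper `uplink_mbps` is hypothetical. Real figures vary widely by sensor type and protocol overhead.

```python
def uplink_mbps(sensors: int, samples_per_sec: float, bytes_per_sample: int) -> float:
    """Raw uplink rate in megabits per second, ignoring protocol overhead."""
    bits_per_sec = sensors * samples_per_sec * bytes_per_sample * 8
    return bits_per_sec / 1_000_000


# 35,000 sensors is from the article; 10 Hz and 16 bytes are assumptions.
rate = uplink_mbps(sensors=35_000, samples_per_sec=10, bytes_per_sample=16)
print(f"{rate:.1f} Mbit/s sustained")
```

Under these assumed figures the platform would need roughly 45 Mbit/s of sustained uplink before any framing, encryption, or retransmission overhead, which is a demanding load for the kind of remote link an offshore site is likely to have.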

For these reasons, and a few others, the paradigm is shifting to edge computing, where on-premises servers analyze the data. This model can help decrease the delays, costs, and security vulnerabilities that often accompany massive data transfers.
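One way an edge server can cut the volume of data leaving the site is to reduce each window of raw readings to a short summary and forward only that. The sketch below is a minimal illustration of the idea, not a description of any particular product; the function name `summarize_window`, the field names, and the alarm threshold are all hypothetical.

```python
from statistics import mean


def summarize_window(readings, alarm_threshold):
    """Reduce a window of raw sensor readings to a single summary record."""
    if not readings:
        raise ValueError("readings must not be empty")
    peak = max(readings)
    return {
        "count": len(readings),
        "min": min(readings),
        "max": peak,
        "mean": mean(readings),
        # Flag the window locally so action need not wait on the cloud.
        "alarm": peak > alarm_threshold,
    }


# Example: many raw pressure samples collapse into one small record.
window = [101.2, 101.4, 101.3, 108.9, 101.1]
print(summarize_window(window, alarm_threshold=105.0))
```

Because the alarm decision is made on site, the device can react without waiting for a round trip, and only the summary record needs to travel upstream.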

As business needs change, IoT devices need to acquire additional functionality, modify existing features, or incorporate more devices into the network accordingly. Evolving requirements could also call for more datasets to be integrated into the analytics.

Making these upgrades requires IoT device interoperability. The single-board computers (SBCs) within the IoT devices must be compatible with different operating platforms. IoT devices must also be prepared to operate in harsh or unstable environments and to handle temperature extremes, including significant temperature fluctuations.

Intense Edge Processing

IoT devices collect a lot of data and need to analyze most of it to make inferences, which is fueling the need for edge compute platforms with more processing capacity and access to greater data storage. In addition, the CPUs need to be ruggedized, as they are exposed to harsh environmental factors such as heat, humidity, shock, and vibration. Moreover, the CPUs need to ensure fast data transmission and minimal latency through high-speed Ethernet connectivity.

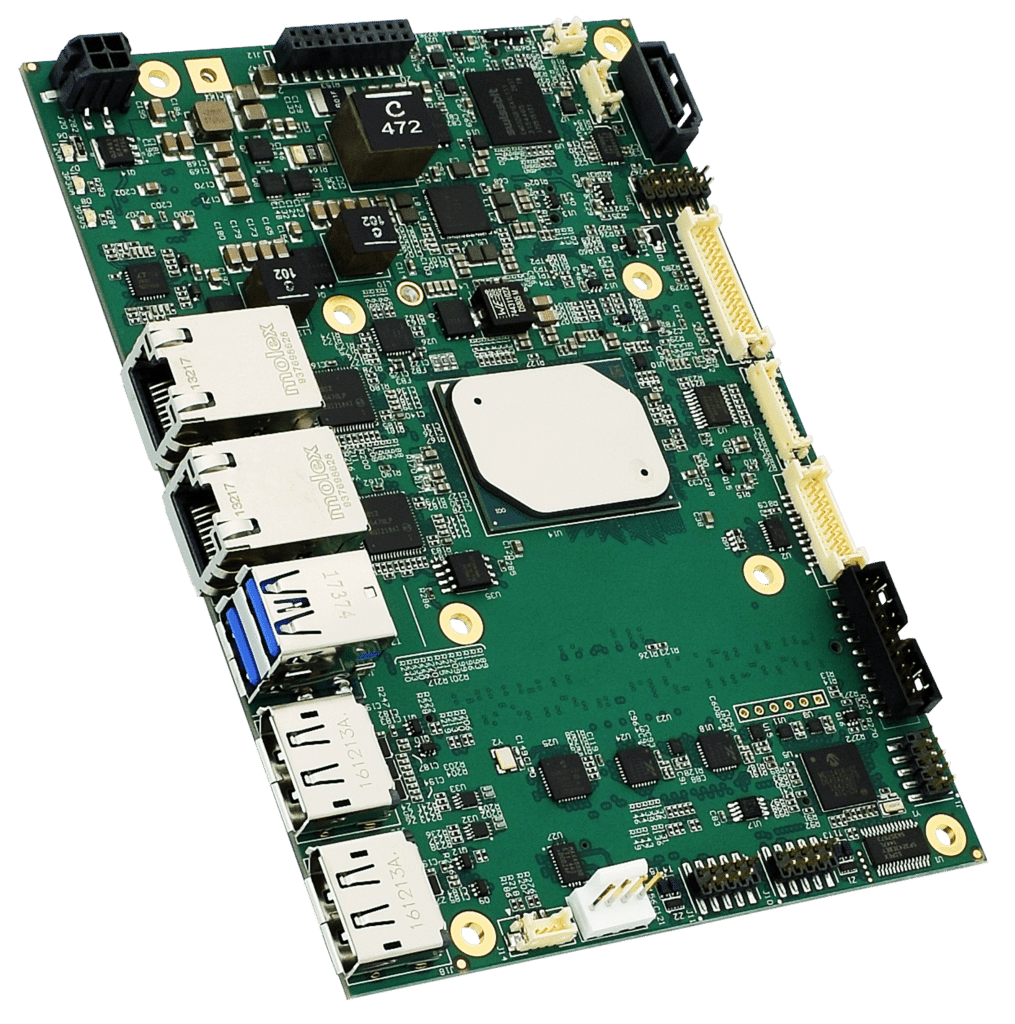

Compactness and power consumption are also factors to consider, depending on the application and the scalability that’s needed. This is where system efficiency comes into play. Filling this need for compactness and low power is the WINSYSTEMS SBC35-427, an SBC that comes equipped with a high-performance CPU.

Maximizing Performance and Efficiency

The SBC35-427 is based on Intel’s Apollo Lake-I E3900 series processor, running at up to 2.0 GHz. It is aimed more toward developers looking for extra network bandwidth and CPU/GPU performance.

In terms of ruggedness, this SBC withstands temperatures from -40°C to +85°C, tolerates 5% to 95% non-condensing humidity, and is tested for shock and random vibration. It offers raw processing performance, an Intel GPU, and up to 8 GB of memory in the de facto 3.5-in. form factor, measuring 5.75 by 4.2 in. The SBC35-427 has expansion capability through Mini-PCI Express, provides a full range of interfaces, and is compatible with various operating systems.